One thing we’ve been seeing since the Iran Conflict started is the absolute tsunami of AI-generated garbage flooding every feed.

Fake videos, fabricated images, conspiracy theories spun up in seconds. People are rightfully pissed about it.

But underneath all of that runs a battlefield most people scroll past: the changing shape of info war.

So what does this mean?

I’ve been watching it unfold in real time, and I want to share what I keep coming back to...

Observation 1: Fake news now costs nothing

When the cost of lying approaches zero, the structure of power itself inverts.

Ten years ago, running a credible influence operation was expensive.

You needed resources, coordination, and cover. Nation-states could do it. Maybe a few well-funded groups. But it took real effort to make a lie land convincingly.

That’s completely over now. We’re living through an industrial revolution of fake news, video, and information, on a scale that’s hard for both people and social media platforms to handle.

What changed:

Accounts and bots were spamming tons of fake video made with AI tools like Gemini and others within hours of the conflict starting.

It takes zero effort to create a fake, but hours of hard work to prove it’s fake.

Every real military movement can now be masked by thousands of synthetic ghost maneuvers.

Today, AI tools have drastically cut the time and cost of generating a hyper-realistic fake image or video. A well-built influence op can use AI agents to spin up thousands of posts, accounts, and variations on a narrative faster than was possible in any previous conflict.

That’s a structural advantage for anyone who wants to lie, spin a narrative, or just profit from speculation and misery. Low cost and high speed create bad incentives. They hand power to arsonists.

You can run multiple accounts from one IP address, like that Pakistani user who ran 21 accounts generating fabricated OSINT videos and pictures.

But abundance of fakes creates a different problem entirely…

Observation 2: Velocity and variety as a weapon

Information velocity is a weapon, but information variety makes it unmanageable.

Modern information warfare isn’t always about making you believe a specific lie. Sometimes the goal is just to drown you in it.

Two factors make this work:

Velocity: Truth is slow. It requires verification, context, and sourcing. Fabrication moves at the speed of the algorithm and AI bots. By the time a careful analyst has confirmed something is real, the narrative has already moved forward several times.

Variety: You’re not just getting one type of content. You’re getting a dizzying cocktail of AI-generated images, deepfake videos, out-of-context archival footage, hot takes, and vibes content all arriving simultaneously. No single deception needs to succeed. The cumulative cognitive load is the point.

Variety and velocity create a digital landfill so deep and wide that our minds default to tribalism just to survive the sensory overload. You stop looking for what is true and start looking for what feels familiar.

I’ve watched genuinely good OSINT accounts fall for it.

When you’re in a high-velocity social media culture, relevance is everything. The pressure to be first creates bad incentives. Mix in monetization and dopamine, and even careful people start pushing things they haven’t fully verified. You end up following geopol astrologers.

In many ways, I think militaries and intelligence agencies have found a solution to those pesky online randos noticing things: simply bury them alive.

There’s an older deception principle at work here: hiding a tree in a forest.

You don’t need to hide a secret if you can surround it with ten thousand high-fidelity fakes. By the time an analyst spots the real signal, it’s been buried by the algorithm or drowned out by a million people arguing about the wrong thing.

But velocity and variety only work if they break something deeper than just attention…

Observation 3: The target is your capacity to reason, not only your beliefs

You don't have to win the argument if you've already blown up the auditorium.

Breaking the will to fight has long been a key part of strategy and tactics.

Modern info war carries this into the age of social media. I’ve noticed that instead of trying to change what you believe (narratives!), it tries to break your capacity to think at all.

Much of it isn’t primarily about getting you to believe the wrong thing. It’s about making you so overwhelmed that you can no longer figure out what’s real at all. When a society can’t reliably distinguish a genuine Iranian missile strike from an AI deepfake, it becomes paralyzed. It can’t mobilize. It can’t respond coherently.

Russian military theorists call this cognitive war: the systematic exhaustion of an adversary’s capacity for reliable judgment. The process follows a recognizable pattern:

First, universal skepticism sets in. People don’t start believing the wrong things; they stop believing anything about the subject at all. They’re rendered opinionless.

Then, verification starts feeling futile. Why spend an hour fact-checking something when you can just not? People default to vibes or tribal alignment because it’s cognitively cheaper.

Finally, shared reality collapses. When everyone is consuming a different flavor of slop, you stop arguing over opinions. You’re inhabiting entirely different factual universes.

Basically, you are striking the processing power, rather than the narrative power.

For the average person, it’s simply too much. In the past, people had time to figure out if a story was real. AI breaks that.

The goal isn’t persuasion, since that takes far more effort and can be countered. The primary objective is epistemic exhaustion. They want to exhaust you.

That means raising the cost of finding the truth so high that most people simply give up. You want people apathetic to the point of neutrality, or apathetic enough that they’re open and primed for the narratives you push.

And when a population gives up on truth, it becomes compliant in ways that serve whoever controls the narrative.

Observation 4: Create deliberate echo chambers

When citizens share no common facts, they can share no common purpose.

There’s a joke that’s gotten popular online: “Geopolitics is therapy.”

It sounds dismissive, but it points at something real. A lot of what I see on Twitter isn’t people trying to understand the conflict.

It’s mostly people seeking confirmation of what they already believe, reassurance that their side is right, and a sense of belonging in a confusing world.

Why?

When people feel helpless during a crisis, online argument becomes a substitute for agency:

Anger that might otherwise translate into real-world action gets discharged into the feed instead, functioning as a pressure valve that keeps people distracted and passive.

Citizens consuming incompatible realities cannot sustain unified will, making it nearly impossible to maintain national purpose when no one can agree on what’s actually happening.

Social validation from AI bots and algorithms constantly reinforces whatever people already believe, making them easier to nudge.

It’s basically choosing fast food for every meal rather than cooking something healthy. Fast food is full of sugar, fat, and oil; confirmation is full of that biochemical rush that makes you feel good. It’s the same reason AI tools tell you you’re right. Info war is no different.

Hostile actors understand this. You don’t need to win a logical debate if you can hack a society’s emotional regulation. Social validation becomes a tool for nudging populations and their leaders into decisions that serve someone else’s interests.

The info war strategists’ ultimate aim is to divide and conquer your reality. It's to make sure you and your neighbors can never agree on anything long enough to act together. We all end up living in a balkanized reality, always craving confirmation to keep that reality going.

That fragmentation is the objective, not the side effect.

Observation 5: Information blackouts are a weapon, not a silence

Silence is not absence. Silence is preparation for a monopoly on information.

We have become genuinely dependent on a constant stream of information to orient ourselves.

It’s a structural condition, the product of years of algorithmic conditioning from social media and long isolation during COVID. If that stream gets cut off, we don’t patiently evaluate; we panic. We’re addicted to the endless scroll, and addicts look for a replacement for that hit.

That’s a powerful biological response to manipulate.

Which is exactly why a blackout is such a powerful instrument. A well-executed blackout creates an absence of information, but that absence is the foundation for a monopoly on it.

Once a population is cut off from external sources, the mechanics follow a consistent pattern:

The information vacuum triggers panic-filling, where people reach for anything available regardless of its accuracy or source.

The aggressor injects their own narrative into that vacuum with almost no resistance, since there is no counterpoint available.

The captive population, cut off from outside reference points, has no way to verify or challenge what they’re being told.

Within 3 days of the start of the conflict, Iran, Israel, and the Gulf States had moved to impose an information blackout. Iran and Israel blocked the posting of footage of strikes on their territory, while the UAE and Qatar threatened people with heavy fines if they posted strike footage.

Sometimes, they simply blocked them.

What happened next mattered more. OSINT accounts and regular people, desperate for real information and getting nothing, filled the void with conspiracy theories and fabricated content they made up themselves.

We’ve seen this playbook executed repeatedly across different conflicts and contexts. Isolate the people, then inject. The methodology is consistent because it works. They want to create either a passive or an active monopoly on reality. It buys space for time.

Every major party to this conflict understands the same grim logic. If you want to disable an adversary, or manage your own population, you need to control who gets to make one.

Observation 6: Platform control is everything

Great powers in the 21st century control platforms. They need only control what people believe to be real.

Here's the thing nobody wants to say out loud.

Everything I've described: the cheap fakes, the velocity, the cognitive overload, the echo chambers, the blackouts? None of it works without a platform to run through.

Controlling those platforms forces nations to adapt and gives platform owners immense political, economic, and social leverage. Nations have to work with them to suppress narratives, and platform owners can set the terms. They own the battlefield.

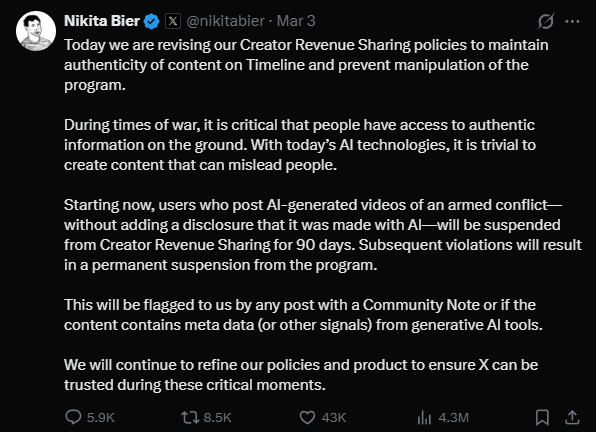

After the wave of AI slop hit the ‘For You’ tab, Elon Musk and Nikita Bier at X moved to demonetize accounts that promoted AI-generated media.

Following the intense media reaction, X began suspending several large accounts and retraining the algorithm to limit their impressions, visibility, and reach. In the end, the platform owners hold the power, and they are influenced by the countries they reside in.

Now X decides which conspiracy theories trend, which fabrications gain traction, and which narratives stick. It does that by changing the association rules between the accounts you interact with and the content you look at, and how often that content is shown to you. That is a power unto itself, and it’s immense.

Managing the recommendation systems, the AI tools, and the data makes platform owners powerful non-state actors. I think they can even rival states.

Controlling a platform in the 21st century is a sovereignty question. A state that doesn’t control its information infrastructure is renting its narrative environment from whoever built the system.

Control the platform, control the narrative, control the user base.

Thanks for reading!

As always, thank you, paid subscribers, for supporting The People's Art of War. In the next few days, I'm releasing an article on how to think clearly in an age of AI-powered infowar…and how to capitalize on it.

Before then, if you have any questions or comments, feel free to drop them below. I'll address them in a follow-up piece.

And if you haven’t already followed:

Spotify: Statescraft

YouTube: People’s Art of War